Switch-KD

Visual-Switch Knowledge Distillation for Vision-Language Models

🏢 Open-source release of Li Auto's MindKD technology

Vision-Language Models (VLMs) have shown remarkable capabilities in joint vision–language understanding, but their large scale poses significant challenges for deployment in resource-constrained scenarios. Knowledge Distillation (KD) offers a viable way to improve model capabilities without increasing model size, making deployment more efficient.

However, applying KD to VLMs is challenged by modality-specific supervision: although multimodal knowledge in VLMs is fused within the language space, current methods supervise each modality separately without explicitly addressing multimodal alignment, leading to inconsistent multimodal knowledge transfer.

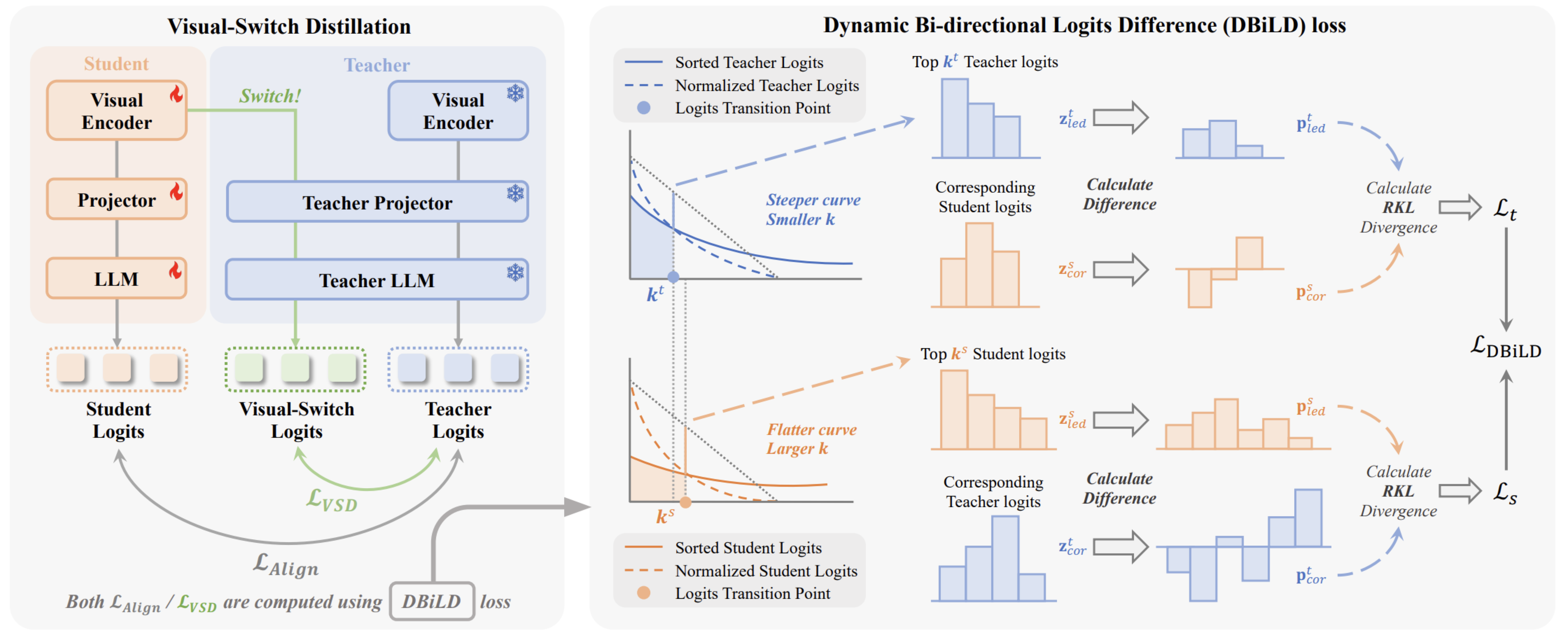

To address this, we propose Switch-KD, a visual-switch distillation framework that unifies vision–language knowledge transfer within a shared text-probability space. Switch-KD comprises two key components:

(1) Visual-Switch Distillation, which switches the student's visual outputs into the teacher's language pathway to construct cross-modal probabilistic references for implicit visual knowledge transfer; and

(2) Dynamic Bi-directional Logits Difference (DBiLD) loss, which adaptively aligns informative probability regions while preserving the distributional structures of teacher and student through bidirectional supervision.

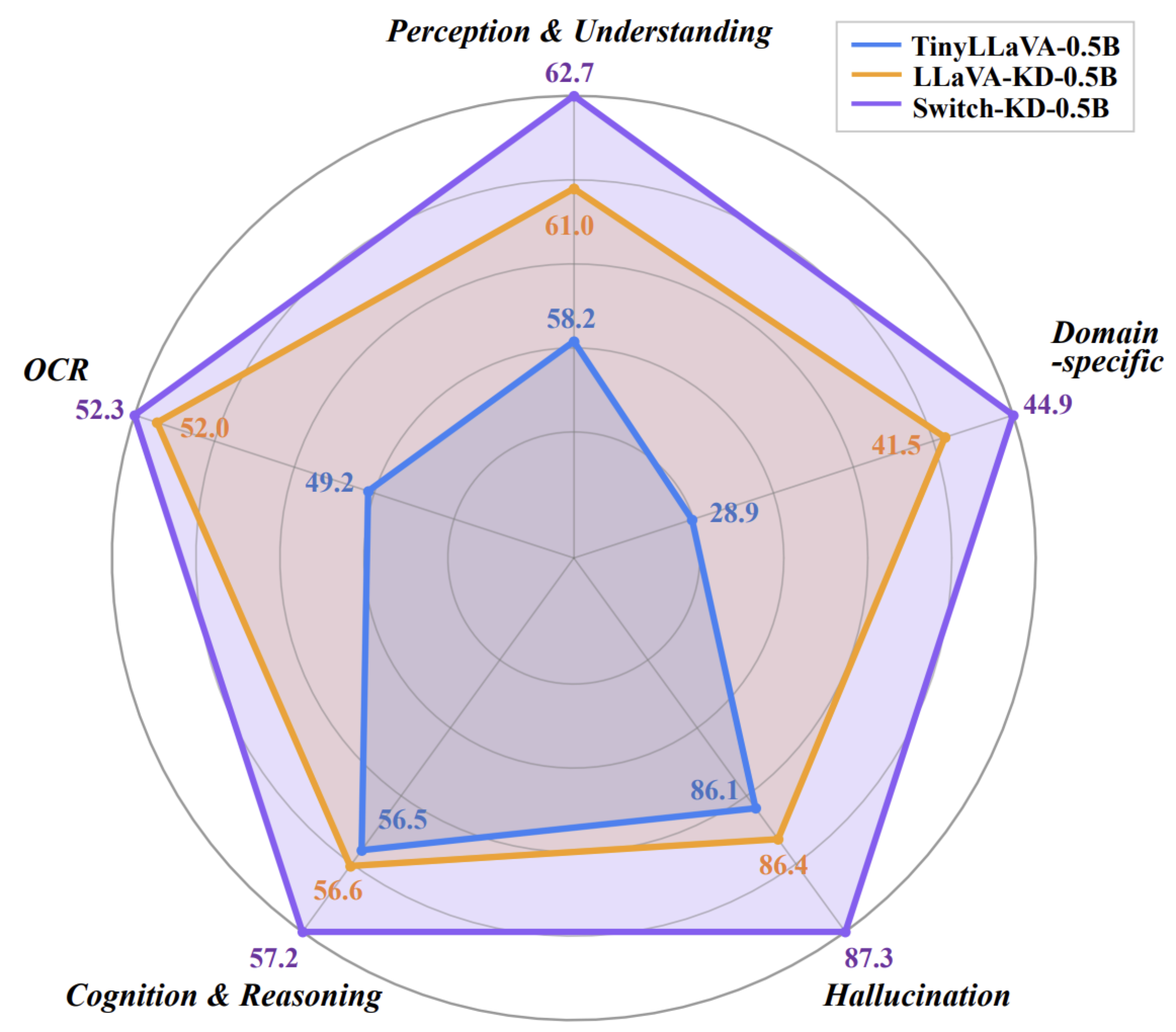

Guided by Switch-KD, a 0.5B TinyLLaVA effectively distills rich multimodal knowledge from its 3B teacher, yielding an average improvement of 3.6 points across 10 multimodal benchmarks without any architectural modification.

Visual-Switch Distillation switches the student's visual encoder outputs into the teacher's language pathway, creating a hybrid inference route in which the teacher's language modules interpret the student's visual features. This design enables implicit cross-modal knowledge transfer within the unified text-probability space without modifying the student's architecture.

Key Insight: If the student visual encoder learns meaningful representations, its visual features should be correctly interpreted and decoded by the teacher's language modules, enabling effective knowledge transfer through a simple switch mechanism.
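The switch route can be illustrated with a toy example. This is only a minimal sketch under stated assumptions, not the released implementation: `ToyVLM`, its random linear "encoder" and "language pathway", the matching feature dimension between student and teacher, and the plain KL divergence used as a stand-in loss are all hypothetical.

```python
import math
import random


def matvec(weights, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl(p, q):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


class ToyVLM:
    """Hypothetical stand-in for a VLM: a vision encoder plus a language pathway."""

    def __init__(self, img_dim, feat_dim, vocab_size, rng):
        self.w_vis = rand_matrix(feat_dim, img_dim, rng)      # "vision encoder"
        self.w_lang = rand_matrix(vocab_size, feat_dim, rng)  # "projector + LLM head"

    def encode_image(self, image):
        return matvec(self.w_vis, image)

    def language_pathway(self, visual_feats):
        return matvec(self.w_lang, visual_feats)  # next-token logits


rng = random.Random(0)
# Assumption: both models expose visual features of the same dimension.
teacher = ToyVLM(img_dim=8, feat_dim=4, vocab_size=6, rng=rng)
student = ToyVLM(img_dim=8, feat_dim=4, vocab_size=6, rng=rng)
image = [rng.uniform(0, 1) for _ in range(8)]

# Regular routes: each model decodes its own visual features.
teacher_logits = teacher.language_pathway(teacher.encode_image(image))
student_logits = student.language_pathway(student.encode_image(image))

# Visual-switch route: student vision features decoded by the teacher's language pathway.
switch_logits = teacher.language_pathway(student.encode_image(image))

p_teacher = softmax(teacher_logits)
p_student = softmax(student_logits)
p_switch = softmax(switch_logits)

# Stand-in objectives in the shared text-probability space (plain KL, an assumption).
loss_switch = kl(p_teacher, p_switch)   # how well the teacher can read student vision
loss_logit = kl(p_teacher, p_student)   # ordinary output-level distillation
print(f"switch loss: {loss_switch:.4f}, logit loss: {loss_logit:.4f}")
```

In this toy setup the switch loss depends on the student only through its visual features, which is one way to read the key insight above: if the student's vision encoder matched the teacher's, the switch route would reproduce the teacher's distribution exactly.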

The Dynamic Bi-directional Logits Difference (DBiLD) loss adaptively aligns informative probability regions while preserving the distributional structures of both teacher and student.
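The exact DBiLD formulation is not given in this section, so the following is only a rough sketch of the general idea of a bidirectional logits-difference loss with an adaptively sized top-k region. Everything specific here is an assumption for illustration: the function names, the cumulative-probability rule for choosing k, the `mass` and `k_min` parameters, and the choice of KL direction.

```python
import math


def softmax(values, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [v / temperature for v in values]
    m = max(scaled)
    exps = [math.exp(v - m) for v in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl(p, q):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def dynamic_k(logits, mass=0.9, k_min=3):
    """Hypothetical rule: smallest k whose top-k probabilities cover `mass`.

    k_min=3 keeps at least three pairwise differences, so the loss is not degenerate.
    """
    probs = sorted(softmax(logits), reverse=True)
    total = 0.0
    for k, p in enumerate(probs, start=1):
        total += p
        if total >= mass:
            return min(max(k, k_min), len(probs))
    return len(probs)


def top_indices(logits, k):
    """Indices of the k largest logits, in descending order of logit value."""
    return sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]


def pairwise_diffs(values):
    """All differences values[i] - values[j] for i < j."""
    n = len(values)
    return [values[i] - values[j] for i in range(n) for j in range(i + 1, n)]


def dbild_sketch(teacher_logits, student_logits, mass=0.9, temperature=1.0):
    """Sum of two logits-difference terms: one led by the teacher's top tokens,
    one led by the student's top tokens (the bidirectional part)."""
    t_idx = top_indices(teacher_logits, dynamic_k(teacher_logits, mass))
    s_idx = top_indices(student_logits, dynamic_k(student_logits, mass))
    loss = 0.0
    for idx in (t_idx, s_idx):
        p = softmax(pairwise_diffs([teacher_logits[i] for i in idx]), temperature)
        q = softmax(pairwise_diffs([student_logits[i] for i in idx]), temperature)
        loss += kl(p, q)
    return loss


teacher_logits = [4.0, 2.0, 1.0, 0.5, -1.0]
student_logits = [1.0, 3.0, 0.0, 0.5, -2.0]
print(dbild_sketch(teacher_logits, student_logits))
```

Working on logit differences rather than raw probabilities makes each term insensitive to a constant shift of the logits, and selecting the region from both the teacher's and the student's rankings means tokens the student over-weights are also supervised.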

Switch-KD achieves the highest average score across 10 multimodal benchmarks in the comparisons below, surpassing existing distillation methods and lightweight VLM approaches.

Benchmark columns fall into three groups: Perception & Understanding, Cognition & Reasoning, and Other.

| Method | MME | MMB | MMB^CN | VQA | GQA | SciQA | MMMU | Text | VizWiz | POPE | Avg10 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| TinyLLaVA | 61.5 | 58.9 | 54.2 | 74.8 | 58.3 | 59.1 | 33.6 | 49.2 | 28.9 | 86.1 | 56.5 |
| LLaVA-KD | 64.7 | 61.3 | 57.0 | 77.7 | 59.8 | 60.6 | 28.3 | 52.0 | 41.5 | 86.4 | 58.9 |
| Switch-KD | 66.8 | 63.5 | 57.8 | 79.6 | 61.6 | 57.9 | 29.8 | 52.3 | 44.9 | 87.3 | 60.1 |

| Method | MME^P | MMB | GQA | SciQA | TextVQA | POPE | Avg |
|---|---|---|---|---|---|---|---|
| MobileVLM V2 | 1289.2 | 55.9 | 59.0 | 64.5 | 52.2 | 86.1 | 63.7 |
| Align-KD\* | 1303.8 | 57.5 | 60.1 | 67.7 | 53.1 | 87.0 | 65.1 |
| Switch-KD | 1411.5 | 68.4 | 61.9 | 71.6 | 57.0 | 87.5 | 69.5 |

\* Align-KD results are from the long instruction subset (3.6M samples). Switch-KD uses only 1.2M samples with a lighter backbone.
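The Avg column of the table above mixes an MME perception score on a roughly 0 to 2000 scale with percentages. The reported averages are consistent with dividing the MME perception score by 20 before taking the mean of the six benchmarks; that normalization is an assumption inferred from the numbers, not something stated here. A short check:

```python
# Rows copied from the table above: MME perception, MMB, GQA, SciQA, TextVQA, POPE.
rows = {
    "MobileVLM V2": [1289.2, 55.9, 59.0, 64.5, 52.2, 86.1],
    "Align-KD": [1303.8, 57.5, 60.1, 67.7, 53.1, 87.0],
    "Switch-KD": [1411.5, 68.4, 61.9, 71.6, 57.0, 87.5],
}


def table_average(scores, mme_scale=20.0):
    """Mean of the six scores after rescaling the first (MME perception) entry.

    mme_scale=20 maps a 0-2000 score onto 0-100; this is an assumed convention.
    """
    normalized = [scores[0] / mme_scale] + scores[1:]
    return round(sum(normalized) / len(normalized), 1)


averages = {name: table_average(scores) for name, scores in rows.items()}
for name, avg in averages.items():
    print(f"{name}: {avg}")
# prints 63.7, 65.1 and 69.5, matching the Avg column
```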

@article{sun2026switchkd,
  title={Switch-KD: Visual-Switch Knowledge Distillation for Vision-Language Models},
  author={Sun, Haoyi and Wang, Xiaoxiao and Mao, Ning and Wang, Qian and Mu, Lifu and Zheng, Wen and Wei, Tao and Chen, Wei},
  journal={arXiv preprint arXiv:2604.14629},
  year={2026}
}